Posts Tagged clustering

A quick overview of machine learning techniques

Posted by Howard Yeend in machine learning on November 27, 2010

Machine learning is a fascinating discipline. Often inspired by natural processes, it can produce astounding results in a wide range of applications. Modern web search is underpinned by ML techniques such as clustering and statistical text processing. Computer games make use of evolutionary algorithms to produce better artificial enemies. Your camera probably has face detection in it for aiding auto-focus. Machine learning is key to making our technology better and our lives easier.

Today I’m going to give a very brief and incomplete overview of machine learning technologies and applications. There are three broad types of machine learning: Categorisation, Optimisation and Prediction.

The K-Means Clustering Machine Learning Algorithm

Posted by Howard Yeend in machine learning on January 24, 2010

The k-means clustering algorithm is one of the simplest unsupervised machine learning algorithms, which can be used to automatically recognise groups of similar points in data without any human intervention or training.

The first step is to represent the data you want to group as points in an n-dimensional space, where n is the number of attributes the data have. For simplicity let’s assume we just want to group the ages of visitors to a website – a one-dimensional space. Let’s assume the set of ages is as follows:

{15,15,16,19,19,20,20,21,22,28,35,40,41,42,43,44,60,61,65}

Now there are a number of ways we could separate these points out. One obvious way is simply to iterate over the sorted set and find the largest gap between adjacent ages. For some sets this can work quite nicely, but in our case the largest gap is the 16 years between 44 and 60, which would give us quite unbalanced groups: 15-44 and 60-65, the latter containing just 3 points and the former being far too broadly distributed.

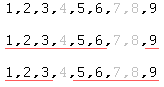
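The largest-gap idea can be sketched in a few lines of Python. This is only an illustration: the function name `split_at_largest_gap` is made up for this example, and it assumes we only ever want two groups.

```python
def split_at_largest_gap(values):
    """Split values into two groups at the widest gap between sorted neighbours."""
    ordered = sorted(values)
    # Index i stands for the gap between ordered[i] and ordered[i + 1].
    widest = max(range(len(ordered) - 1),
                 key=lambda i: ordered[i + 1] - ordered[i])
    return ordered[:widest + 1], ordered[widest + 1:]


ages = [15, 15, 16, 19, 19, 20, 20, 21, 22, 28,
        35, 40, 41, 42, 43, 44, 60, 61, 65]
low, high = split_at_largest_gap(ages)
print(low[0], low[-1], len(low))     # 15 44 16
print(high[0], high[-1], len(high))  # 60 65 3
```

Sixteen of the nineteen ages land in the first group, which is the imbalance described above.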

Using k-means clustering we can obtain more tightly defined groups. Consider the set {1,2,3,5,6,9}: using the simplistic largest-gap technique we get two sets, {1,2,3,5,6} and {9}, while the k-means algorithm produces a grouping of {1,2,3} and {5,6,9}. These are more cohesive clusters with less dispersion of points inside the groups, because k-means tries to minimise the sum of squared distances from each point to the centre of its cluster.

Notice how in the first set of clusters the outermost points of the first cluster are quite far away from the middle of the cluster, while in the second set the points in both clusters are closer to the centre of their groups, making these clusters better defined and less sparsely populated.
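To put numbers on that comparison, here is a small Python sketch that adds up the squared distance of every point from the mean of its cluster. The helper name `within_cluster_sum_of_squares` is my own label for this example, not something from a library.

```python
def within_cluster_sum_of_squares(clusters):
    """Sum, over all clusters, of squared distances from each point to its cluster mean."""
    total = 0.0
    for cluster in clusters:
        centre = sum(cluster) / len(cluster)
        total += sum((x - centre) ** 2 for x in cluster)
    return total


gap_split = [[1, 2, 3, 5, 6], [9]]
kmeans_split = [[1, 2, 3], [5, 6, 9]]
print(round(within_cluster_sum_of_squares(gap_split), 2))     # 17.2
print(round(within_cluster_sum_of_squares(kmeans_split), 2))  # 10.67
```

The lower total for {1,2,3} and {5,6,9} is what "more cohesive" means here.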

Now let’s have a look at the algorithm and work through the example age data to see if we can get a tighter grouping than 15-44 and 60-65 using clustering:
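As a rough sketch, and assuming the usual assign-then-update loop (often called Lloyd's algorithm), one-dimensional k-means might look like this in Python. The function name `kmeans_1d` and the starting centroids are illustrative choices of mine, and the result can depend on where the centroids start.

```python
def kmeans_1d(values, centroids, max_iterations=100):
    """Cluster 1-D values around the given starting centroids (illustrative sketch)."""
    centroids = list(centroids)
    clusters = []
    for _ in range(max_iterations):
        # Assignment step: put each value with its nearest centroid.
        clusters = [[] for _ in centroids]
        for value in values:
            nearest = min(range(len(centroids)),
                          key=lambda i: abs(value - centroids[i]))
            clusters[nearest].append(value)
        # Update step: move each centroid to the mean of its cluster.
        # An empty cluster keeps its previous centroid.
        updated = [
            sum(cluster) / len(cluster) if cluster else centroids[i]
            for i, cluster in enumerate(clusters)
        ]
        if updated == centroids:
            break  # Nothing moved, so the clusters are stable.
        centroids = updated
    return clusters, centroids


# Small set from earlier, with assumed starting centroids 1 and 5.
clusters, centroids = kmeans_1d([1, 2, 3, 5, 6, 9], [1, 5])
print(clusters)  # [[1, 2, 3], [5, 6, 9]]

# The age data, with hypothetical starting centroids at the two extremes.
ages = [15, 15, 16, 19, 19, 20, 20, 21, 22, 28,
        35, 40, 41, 42, 43, 44, 60, 61, 65]
age_clusters, age_centroids = kmeans_1d(ages, [15, 65])
for cluster in age_clusters:
    print(cluster[0], "-", cluster[-1], "(", len(cluster), "points )")
```

Passing the starting centroids in explicitly keeps the example repeatable; picking them at random is common in practice but would make each run differ.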
